Faisal Zain is a distinguished healthcare technology strategist with over 25 years of global experience in medical devices and digital transformation. Having held leadership roles at major firms like TCS and Cognizant, he currently focuses on accelerating health outcomes through the intersection of data, cloud infrastructure, and enterprise-scale AI. His work is centered on the critical challenge of scaling innovation while maintaining the rigorous safety standards required in clinical environments.

In this conversation, we explore the transition from isolated AI experiments to integrated enterprise architectures. We discuss the nuances of human-in-the-loop oversight, the automation of regulatory assurance, and the specific metrics—such as protocol adherence and intervention rates—that leaders must track to ensure clinical trust.

How do organizations transition from isolated AI pilots to modular, enterprise-wide architectures? What specific data governance foundations are necessary to ensure this scale does not introduce clinical risk, and how can these controls be measured during the initial rollout phase?

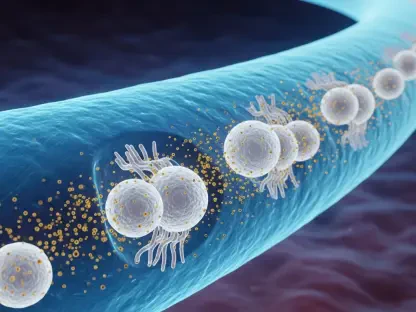

The transition requires a fundamental shift from viewing AI as a series of point solutions to treating it as a modular, enterprise-wide architecture where accountability is explicit at every layer. To achieve this, organizations must first establish a foundation of clean, unified data, as the performance of any clinical AI is only as reliable as the traceability of the information feeding it. We start by defining oversight frameworks before deployment, rather than retrofitting them, which allows us to move from reactive fixes to predictive care. During the initial rollout, we measure the effectiveness of these controls by tracking the intervention rate—the frequency with which a clinician must reject or correct an agent’s output—to catch model drift before it impacts patient safety.
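To make the intervention-rate idea concrete, here is a minimal Python sketch. It assumes each clinician review of an agent output is logged as accepted or not. It computes the rate over a rolling window and flags possible drift when the rate crosses a threshold. The `Review` and `InterventionMonitor` names, the window size, and the threshold are hypothetical illustrations, not values described in the interview.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Review:
    """One clinician review of an agent output (hypothetical record shape)."""
    accepted: bool  # False when the clinician rejected or corrected the output


class InterventionMonitor:
    """Rolling intervention rate over the most recent reviews.

    Window size and alert threshold are illustrative assumptions,
    not values taken from any clinical standard.
    """

    def __init__(self, window: int = 200, alert_threshold: float = 0.15):
        # True marks a review in which the clinician had to intervene.
        self.interventions = deque(maxlen=window)
        self.alert_threshold = alert_threshold

    def record(self, review: Review) -> None:
        self.interventions.append(not review.accepted)

    def intervention_rate(self) -> float:
        if not self.interventions:
            return 0.0
        return sum(self.interventions) / len(self.interventions)

    def drift_suspected(self) -> bool:
        # A rate above the threshold is treated as an early warning of drift.
        return self.intervention_rate() > self.alert_threshold


# Example: 3 of the last 10 outputs needed correction.
monitor = InterventionMonitor(window=10, alert_threshold=0.2)
for accepted in [True, True, False, True, False, False, True, True, True, True]:
    monitor.record(Review(accepted=accepted))
print(monitor.intervention_rate())  # 0.3
print(monitor.drift_suspected())    # True
```

Because `deque(maxlen=...)` discards the oldest entries automatically, the rate reflects recent behavior. A rising rate after a model update shows up quickly rather than being diluted by months of history.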

When distinguishing between high-risk clinical decisions and administrative tasks, how do you define the thresholds for “human-in-the-loop” versus “human-on-the-loop” oversight? What specific criteria determine if an AI agent can execute a task autonomously or if it must escalate to a clinician?

We define these thresholds based on the reversibility and the clinical impact of the task at hand. “Human-in-the-loop” is our mandatory standard for high-risk or irreversible decisions, such as changing a patient’s medication, making a primary diagnosis, or approving high-value claims that dictate care access. In these scenarios, the AI cannot proceed without explicit clinician approval, or it must automatically escalate the case if its confidence score falls below a predefined safety level. Conversely, “human-on-the-loop” is reserved for lower-risk, reversible administrative workflows like documentation routing or scheduling, where the AI acts autonomously while clinicians monitor the outcomes through retrospective audits and dashboards.
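The routing rule described above can be sketched as a small decision function. This is an illustrative Python sketch only. It assumes each task carries flags for reversibility and clinical impact plus a model confidence score. `Oversight`, `Task`, `route`, and the 0.90 `CONFIDENCE_FLOOR` are hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Oversight(Enum):
    HUMAN_IN_THE_LOOP = "human-in-the-loop"  # clinician approves before action
    HUMAN_ON_THE_LOOP = "human-on-the-loop"  # agent acts; clinicians audit afterwards
    ESCALATE = "escalate"                    # case handed to a clinician immediately


@dataclass
class Task:
    name: str
    reversible: bool
    high_clinical_impact: bool
    confidence: float  # model confidence in [0, 1]


# Hypothetical safety floor; a real value would be set from validation data.
CONFIDENCE_FLOOR = 0.90


def route(task: Task) -> Oversight:
    """Choose an oversight mode from reversibility, clinical impact and confidence."""
    if task.high_clinical_impact or not task.reversible:
        # High-risk or irreversible work is never executed autonomously.
        if task.confidence < CONFIDENCE_FLOOR:
            return Oversight.ESCALATE
        return Oversight.HUMAN_IN_THE_LOOP
    # Low-risk, reversible work runs autonomously under retrospective audit.
    return Oversight.HUMAN_ON_THE_LOOP


print(route(Task("change medication", False, True, 0.97)).value)   # human-in-the-loop
print(route(Task("primary diagnosis", True, True, 0.62)).value)    # escalate
print(route(Task("schedule follow-up", True, False, 0.80)).value)  # human-on-the-loop
```

The risk check comes before the confidence check. A highly confident model therefore still cannot act alone on an irreversible decision, and confidence only decides whether a clinician reviews the case in the normal queue or receives it immediately.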

Manual verification often slows down innovation in high-pressure medical environments. How can teams automate assurance workflows, such as performance qualification, directly within development pipelines? How does this approach specifically address administrative bottlenecks, like prior authorization, that contribute to provider burnout?

We address the “bottleneck” problem by embedding compliance checks directly into the DevOps pipeline, turning static checklists into continuous, automated oversight. By automating performance qualification and audit trails, we can release updates with the same rigor as manual documentation but in a fraction of the time. This is particularly transformative for prior authorizations, where 93 percent of physicians report that delays impact patient care and fuel burnout. Our autonomous agents perform “silent reviews,” synthesizing patient records and clinical context into draft summaries that clinicians can validate in a single click, reducing turnaround times from several days to just a few hours.
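As a rough illustration of a performance-qualification check running as a pipeline step, the Python sketch below compares evaluation metrics against acceptance criteria. It then emits an audit-trail record with a SHA-256 digest of its contents and returns a CI-style exit code. The metric names, thresholds, and the `evaluate`, `audit_record`, and `run_gate` functions are assumptions made for the example, not a description of any specific organization's validation plan.

```python
import hashlib
import json
from datetime import datetime, timezone

# Hypothetical acceptance criteria for a performance-qualification (PQ) gate;
# real criteria would come from the organization's validation plan.
ACCEPTANCE_CRITERIA = {
    "sensitivity": ("min", 0.92),
    "specificity": ("min", 0.88),
    "intervention_rate": ("max", 0.15),
}


def evaluate(metrics: dict) -> list:
    """Return human-readable failures; an empty list means the gate passes."""
    failures = []
    for name, (kind, limit) in ACCEPTANCE_CRITERIA.items():
        value = metrics.get(name)
        if value is None:
            failures.append(f"{name}: missing from evaluation results")
        elif kind == "min" and value < limit:
            failures.append(f"{name}: {value} is below the required {limit}")
        elif kind == "max" and value > limit:
            failures.append(f"{name}: {value} exceeds the allowed {limit}")
    return failures


def audit_record(model_version: str, metrics: dict, failures: list) -> dict:
    """Build an audit-trail entry with a SHA-256 digest of its contents."""
    body = {
        "model_version": model_version,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "metrics": metrics,
        "criteria": ACCEPTANCE_CRITERIA,
        "passed": not failures,
        "failures": failures,
    }
    digest = hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()
    return {**body, "sha256": digest}


def run_gate(model_version: str, metrics: dict) -> int:
    """Evaluate, emit the audit record, and return a CI-style exit code."""
    failures = evaluate(metrics)
    print(json.dumps(audit_record(model_version, metrics, failures), indent=2))
    return 1 if failures else 0


# In a pipeline step this would typically be `sys.exit(run_gate(...))`,
# so a non-zero code fails the job and blocks the release.
exit_code = run_gate(
    "model-v1.4.2",
    {"sensitivity": 0.94, "specificity": 0.86, "intervention_rate": 0.09},
)
```

In this example the specificity of 0.86 falls below the assumed 0.88 floor, so the gate returns 1. The audit record captures exactly which criterion failed. That record is the kind of evidence a manual checklist would otherwise have to reproduce by hand.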

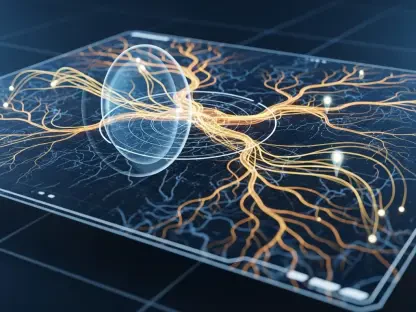

Generative AI is inherently probabilistic, yet clinical environments require predictable results. How do you translate high-level governance policies into deterministic engineering controls that prevent unauthorized actions? What role does unified data play in ensuring these safeguards function correctly at the point of care?

While generative AI is adaptive and probabilistic, we surround it with deterministic engineering “guardrails” that act as an execution layer the model cannot bypass. High-level policies are translated into hard-coded safety parameters; for example, an AI might generate a treatment suggestion, but a deterministic control prevents that suggestion from being entered into the record unless it meets specific safety conditions. This entire structure depends on unified data, which provides the “source of truth” against which the safeguards verify each output. Without this data traceability, the engineering controls cannot accurately judge whether the AI’s output is clinically sound or potentially hazardous.
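Here is a minimal Python sketch of that pattern. A model-generated medication suggestion reaches the chart only if fixed rules, checked against a unified patient record, all pass. The record shape, the placeholder drug names, the dose limits, and the interaction table are all hypothetical. Real rules would come from a curated formulary and interaction database.

```python
from dataclasses import dataclass, field


@dataclass
class PatientRecord:
    """Unified patient record used as the source of truth (hypothetical shape)."""
    patient_id: str
    allergies: set = field(default_factory=set)
    active_medications: set = field(default_factory=set)


@dataclass
class Suggestion:
    """A medication suggestion produced by a generative model."""
    drug: str
    daily_dose_mg: float


# Illustrative rule tables with placeholder drug names; real rules would come
# from a curated formulary and interaction database.
MAX_DAILY_DOSE_MG = {"drug_a": 4000.0, "drug_b": 40.0}
INTERACTING_PAIRS = {frozenset({"drug_b", "drug_c"})}


def violations(suggestion: Suggestion, record: PatientRecord) -> list:
    """Deterministic checks: the same inputs always yield the same verdict."""
    found = []
    limit = MAX_DAILY_DOSE_MG.get(suggestion.drug)
    if limit is None:
        found.append("drug not in formulary")
    elif suggestion.daily_dose_mg > limit:
        found.append("dose exceeds maximum")
    if suggestion.drug in record.allergies:
        found.append("documented allergy")
    for med in sorted(record.active_medications):
        if frozenset({suggestion.drug, med}) in INTERACTING_PAIRS:
            found.append(f"interaction with {med}")
    return found


def commit_to_chart(suggestion: Suggestion, record: PatientRecord, chart: list) -> list:
    """Append the suggestion to the chart only if no rule is violated."""
    found = violations(suggestion, record)
    if not found:
        chart.append((record.patient_id, suggestion))
    return found


chart = []
record = PatientRecord("p-001", allergies={"drug_a"}, active_medications={"drug_c"})
print(commit_to_chart(Suggestion("drug_b", 80.0), record, chart))
# ['dose exceeds maximum', 'interaction with drug_c']
print(commit_to_chart(Suggestion("drug_a", 500.0), record, chart))
# ['documented allergy']
print(len(chart))  # 0: nothing reached the record
```

The guardrail never inspects how the suggestion was generated. It only checks the output against the record. This is why the checks are only as good as the allergy and medication data they read.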

To maintain clinical trust, what specific metrics should leaders track beyond simple cost savings? How do intervention rates and adherence to evidence-based protocols, such as NCCN guidelines, provide a clearer picture of model reliability and explainability than traditional throughput data?

Trust is built on transparency and clinical accuracy, not just speed or reduced overhead. We prioritize three specific metrics: the intervention rate, protocol adherence, and the explainability score. Protocol adherence is especially vital in fields like oncology, where we track how consistently the AI’s decisions align with established evidence-based standards like the NCCN guidelines to ensure the output is compliant, not just plausible. The explainability score, in turn, measures whether the agent cites the specific data source behind every decision; requiring those citations provides the traceability that clinicians need to feel confident in the AI’s recommendations.
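The three metrics can be computed from a decision log, as this small Python sketch shows. It assumes each logged decision records the agent's recommendation, the options a reference guideline permits for that case, any cited data sources, and whether a clinician overrode it. The `Decision` schema and `trust_metrics` function are hypothetical. `guideline_options` is an assumed local encoding, not an official NCCN data format. The sketch also counts a decision as explained if it cites at least one source, which is a simplification.

```python
from dataclasses import dataclass, field


@dataclass
class Decision:
    """One logged agent decision (hypothetical log schema)."""
    recommendation: str
    # Options the reference guideline permits for this case, as encoded by
    # a local team; this is an assumption, not an official NCCN data format.
    guideline_options: set
    cited_sources: list = field(default_factory=list)
    clinician_overrode: bool = False


def trust_metrics(decisions: list) -> dict:
    """Compute the three trust metrics as fractions of logged decisions."""
    if not decisions:
        raise ValueError("no decisions logged")
    n = len(decisions)
    return {
        "intervention_rate": sum(d.clinician_overrode for d in decisions) / n,
        "protocol_adherence": sum(d.recommendation in d.guideline_options for d in decisions) / n,
        "explainability_score": sum(bool(d.cited_sources) for d in decisions) / n,
    }


log = [
    Decision("regimen_x", {"regimen_x", "regimen_y"}, ["pathology_report_17"]),
    Decision("regimen_z", {"regimen_x"}, ["clinic_note_42"], clinician_overrode=True),
    Decision("regimen_y", {"regimen_x", "regimen_y"}),
    Decision("regimen_x", {"regimen_x"}, ["lab_panel_8"]),
]
print(trust_metrics(log))
# {'intervention_rate': 0.25, 'protocol_adherence': 0.75, 'explainability_score': 0.75}
```

Unlike throughput figures, each metric here is tied to individual decisions. A drop in adherence or explainability therefore points directly to the cases a reviewer should inspect.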

Moving governance from a final checkpoint to a leadership imperative requires a shift in budgeting and staffing. How should executives restructure their teams to prioritize enforceable guardrails? What are the practical steps to ensure that safety indicators are embedded directly into daily workflows?

Executives must stop treating governance as a secondary compliance task and start budgeting for it with the same urgency as model development itself. This requires a restructuring where safety and engineering teams work in tandem from day one to build enforceable guardrails directly into the clinical workflow. Practically, this means moving beyond adding extra review layers—which only creates alert fatigue—and instead focusing on “silent” integration where the AI handles the heavy lifting of data synthesis. By making safety indicators a part of the daily dashboard, leadership ensures that innovation and oversight advance at the same velocity.

What is your forecast for autonomous AI in healthcare?

I believe we are entering an era where AI will shift from being a “tool” to a “partner” that helps address the 20 percent of global healthcare spending currently lost to waste and inefficiency. My forecast is that within the next five years, autonomous AI will handle the vast majority of administrative and routine diagnostic triaging, but its success will hinge entirely on the strength of our governance frameworks. We will see a move toward “invisible” AI that supports clinicians by removing the manual burden of documentation, allowing them to focus almost exclusively on complex patient care while the system maintains a constant, automated vigil over safety and protocol adherence.